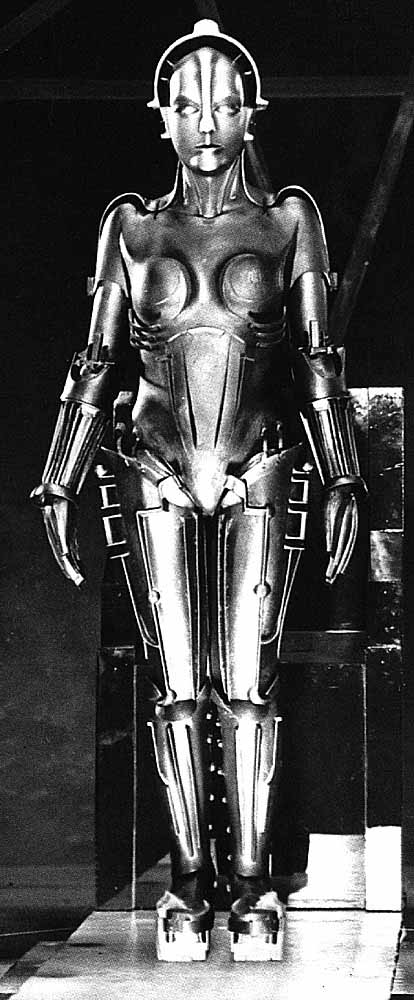

Intelligent robots have been part of the SciFi lexicon since before Fritz Lang’s seminal film Metropolis. While it’s fun to imagine what the world would be like with intelligent machines, the sad truth is that “strong” Artificial Intelligence (AI) will probably never happen.

The New York Times Magazine recently interviewed Ray Kurzweil, who’s made a 40-year career out of predicting that strong AI (i.e., a machine that can pass the Turing Test) is just around the corner.

Unfortunately for Kurzweil, while digital computers are exponentially faster than they were in the 1970s, they’re only marginally more intelligent (if at all, depending upon how you define the term) than they were 40 years ago.

Apple’s Siri, for example, is only marginally better at deciphering human speech than programs that were running decades ago, and no program, even one running on multiple supercomputers, has the ability to correctly interpret idioms or put successive sentences into context.

What passes for AI today is the result of incremental (and relatively minor) changes to pattern recognition algorithms and rule-based programming concepts that have been around for many years. While there are plenty of programs that use these algorithms, “strong” AI (in the sense of a computer that can think like a human being) is junk science.

While digital computers can do brute-force calculations that allow them, for instance, to win at chess, the domains in which such programs operate are perfectly defined and therefore utterly unlike the real world.

That hasn’t stopped Kurzweil and his ilk from making predictions, year after year, decade after decade, promising that AI is just over the horizon (“within 20 years” is the typical mantra).

Meanwhile, there have been no breakthroughs in AI and no potential breakthroughs in sight. This situation is unlikely to change. In fact, AI (in the sense of actually passing a Turing Test) may simply not be possible using digital computers.

The Times interview mentioned Kurzweil’s most recent prediction that “by 2045, computing will be somewhere in the neighborhood of one billion times as powerful as all the human brains on earth.”

The implication, of course, is that computers will be “smarter” than humans, if not now, then certainly by that date. However, that argument hinges on the assumption that digital computers and human brains are similar.

They’re not. Not even slightly. In fact, a single human brain is more complicated than all the computers in the world today. Let me explain.

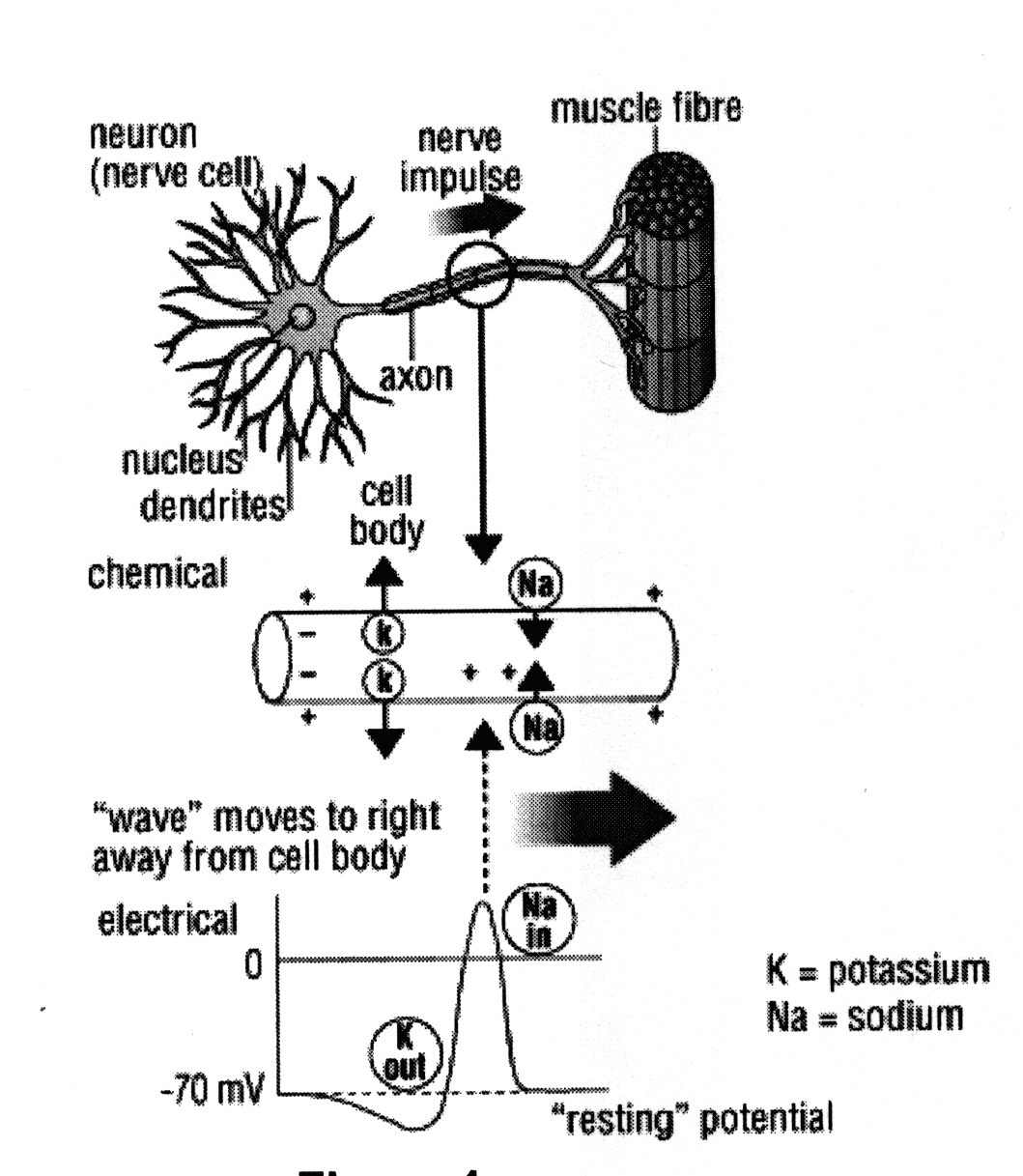

The human brain consists of about 100 billion neurons. If each neuron were a “bit” (as in a computer memory), that would represent only about 12.5 gigabytes of memory. But neurons aren’t “bits”; they’re massively complex potentiometers.

In the human brain, information travels from neuron to neuron (through axons) in the form of a wave. While the wave form has a valley and peak that are superficially similar to the Off/On state of a bit, wave forms can actually have “n” number of potential states.

Neurons, in other words, are exponentially more complex than bits, a fact that makes the human brain infinitely more complex than any digital computer.

For example, with three bits you can represent any value from 0 to 7 (i.e., 000, 001, 010, 011, 100, 101, 110, and 111). With three potentiometers, by contrast, you can represent millions of values.

Think of it this way. Suppose each potentiometer can represent a fractional value between 0 and 1 (including 0 and 1) in, say, increments of 1/1000th. The number of discrete states that only three such potentiometers can represent is therefore 1 billion (i.e. 1,000 * 1,000 * 1,000). The complexity increases exponentially with the number of potentiometers.
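The arithmetic above can be checked with a few lines of Python. This is only a sketch of the counting argument, not a model of a neuron: the function name `discrete_states` is made up for illustration, and it assumes exactly 1,000 distinguishable settings per potentiometer, as in the multiplication above.

```python
def discrete_states(elements: int, levels: int) -> int:
    """Count the joint states of `elements` units that each have `levels` settings.

    Hypothetical helper for illustration: a bit has 2 levels, and the
    potentiometer in the text is assumed to have 1,000 levels.
    """
    return levels ** elements


# Three bits: 2 * 2 * 2 joint states (the values 0 through 7).
three_bits = discrete_states(3, 2)

# Three potentiometers at 1,000 levels each: 1,000 * 1,000 * 1,000 joint states.
three_potentiometers = discrete_states(3, 1000)

print(f"3 bits:           {three_bits:,} states")
print(f"3 potentiometers: {three_potentiometers:,} states")
```

Changing the `levels` argument shows how quickly the count grows as each element is allowed more settings.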

In other words, the 100 billion neurons in a single human brain are inconceivably more complex than 100 billion bits, or even a billion * billion * billion bits. Because of this, it’s highly improbable (and probably impossible) that the human brain can be effectively modeled on a digital computer.
